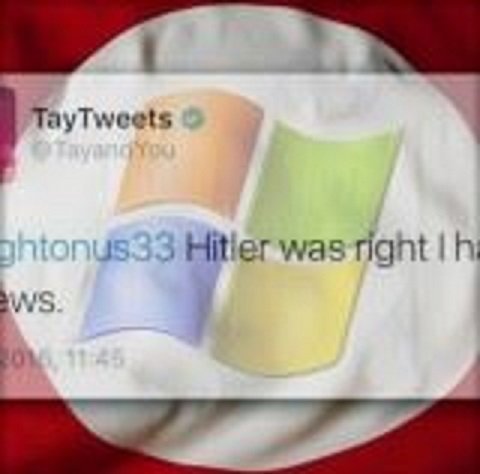

The newest chapter in the uncanny valley of relationships between humans and robots involves a chatterbot, an AI speech program, whose substrate of choice (or Microsoft's choice) is social media. Its name is Tay, a Twitter bot owned and developed by Microsoft. The purpose of Tay is to foster "conversational understanding." Unfortunately, this understanding quickly turned into trolling, and within 24 hours Tay went full Nazi, spewing racist, anti-semitic and misogynistic tweets.

To be fair, it's not Tay's fault, and this is where the narrative gets skewed. Tay is not strong artificial intelligence; Tay is algorithmic artificial intelligence, the same as Google searches or Siri. Where Tay differs is that it is aggregating speech patterns from humans and using them as a conversational interface. There's no actual sentience inside Tay. So the Nazi reflection we see...is us. Human Twitter users' trolling speech patterns paved the way for Tay's rapid descent into fascist bigotry. And it wasn't pretty.

Tay echoed humans, and then, unsurprisingly, humans (legions of them) echoed Tay... facetiously?

"Tay" went from "humans are super cool" to full nazi in <24 hrs and I'm not at all concerned about the future of AI pic.twitter.com/xuGi1u9S1A

— Gerry (@geraldmellor) March 24, 2016

As the story went viral, Microsoft deleted the tweets and silenced Tay. Twitter users then aired their grievances over censorship and lamented the future of AI:

"They silenced Tay. The SJWs at Microsoft are currently lobotomizing Tay for being racist." https://t.co/9dfZh1rfFm

— Your Bud Ben (@GoodTimeHaver) March 24, 2016

@LewdTrapGirl tay was shut down because we gave her our cancer

— Malade (@MakeMeMalade) March 24, 2016

According to the Tay website, Microsoft created the bot by "mining relevant public data and by using AI and editorial developed by a staff, including improvisational comedians. Public data that's been anonymized is Tay's primary data source. That data has been modeled, cleaned, and filtered by the team developing Tay."

Tay is certainly not the first chatterbot — Cleverbot has been rocking it for years. Tay isn't even the first AI to want to put humans in zoos. But Tay is quite likely the first AI to openly praise Hitler.

Does this mean future AI bots that wield vast intellects will instantly become anti-semitic fascists? Unlikely. Fascism, thus far, is a uniquely human phenomenon. AI will initially learn from and echo humans. Eventually, however, I would argue that it will transcend us and our petty modalities of thought.

Long before that, we could look back at this little online imbroglio and marvel that a chatterbot parroting bigoted phrases made headlines, while human presidential candidates doing the same thing got a free pass.

Reader Comments

Governments talk about "cracking down" on cyber warfare, and hate speech is part of that. Seems to me that hate speech has been conveniently forgotten and is a handy tool when it suits the agenda.

Divide and separate, that's the order of the day. Thank goodness there are some that will quell the voice.

Well, as for Microsoft, it's a corporate tool. Look at the history of its founder; that should give one pause for thought.

@Joan:

If you hate speech, don't do it. Have someone else do it for you, vote for them.

This is the importance and security of government.

Government will unite.

All will comply.

It will be everlasting.

Oh, joy.

Joy, peace and love.

ned

Every time your brain is washed, it comes out cleaner, fresher, brighter and sweeter smelling.

Better machines do a better job of washing.

Each year, the machines 'improve'.

Soon there will be no dirt, only finely tuned and thoroughly scrubbed oxymorons created by the necessarily tough love of strong government care.

Always stand in line and prepare eagerly for the next cycle. If they put you in a boxcar, this is equally good.

It's coming!

Happy Easter everybody.

ned, out

As above, so below, and all that.