The research was compiled by two atmospheric scientists at the University of Alabama in Huntsville, Dr. Roy Spencer and Professor John Christy. They used a dataset of urbanisation changes called 'Built-Up' to determine the average effect that urbanisation has had on surface temperatures. Urbanisation differences were compared to temperature differences from closely spaced weather stations. The temperatures plotted are summertime morning readings. A full methodology of the project is shown here in a posting on Dr. Spencer's blog.

Dr. Spencer believes that the 'Built-Up' dataset, which extends back to the 1970s, will be useful in 'de-urbanising' land-based surface temperature measurements in the U.S. as well as other countries. All the major global datasets use temperature measurements from the Integrated Surface Database (ISD), and all have undertaken retrospective upward adjustments in the recent past. In the U.K., the Met Office removed a 'pause' in global temperatures from 1998 to around 2010 by two significant adjustments to its HadCRUT database over the last 10 years. The adjustments added about 30% warming to the recent record. Removing the recent adjustments would bring the surface datasets more into line with the accurate measurements made by satellites and meteorological balloons.

Of course, if the objective is to promote a command-and-control Net Zero project, using widespread fear of rising temperatures to mandate huge societal and economic changes, a little extra warming would appear useful. But warming on a global scale started to run out of steam over 20 years ago, and the stunt can only be pulled for so long before the disconnect with reality becomes too obvious. There is a danger that the integrity of the surface measurements will be put on the line. Earlier this year, two top atmospheric scientists, Emeritus Professors William Happer and Richard Lindzen, told a U.S. Government enquiry that "climate science is awash with manipulated data, which provides no reliable scientific evidence".

As regular readers will recall, this is not the first time that the NOAA U.S. surface database has been under fire. The U.S. meteorologist Anthony Watts recently published a critical report calling it "fatally flawed". He found that 96% of U.S. temperature stations failed to meet what NOAA itself considered to be acceptable and uncorrupted placement standards. Watts defined 'corruption' as being caused by the localised effects of urbanisation. His report also drew attention to a rarely publicised NOAA database compiled from 114 nationwide stations designed to provide continuous recordings away from urban heat distortions. The measurements started in 2005, and to date show little if any warming.

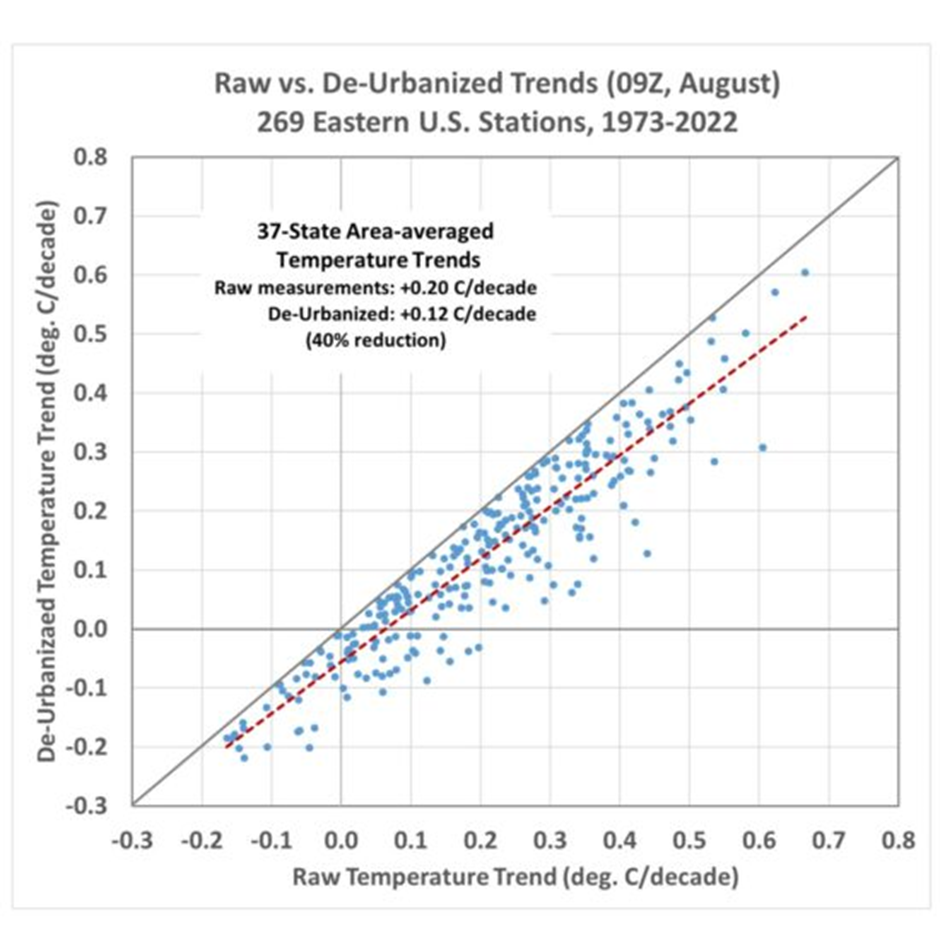

Below is the graph plotted by Dr. Spencer showing the 'raw' measurements taken from the ISD compared with his de-urbanised figures over 37 eastern states.

It can be seen that there is a dramatic fall in the warming trend over the last 50 years, down to only 0.12°C a decade. But the NOAA adjusted figures are even higher than the raw data. The official NOAA average is actually 0.24°C warming a decade, meaning the de-urbanised measurement is 50% lower than this.

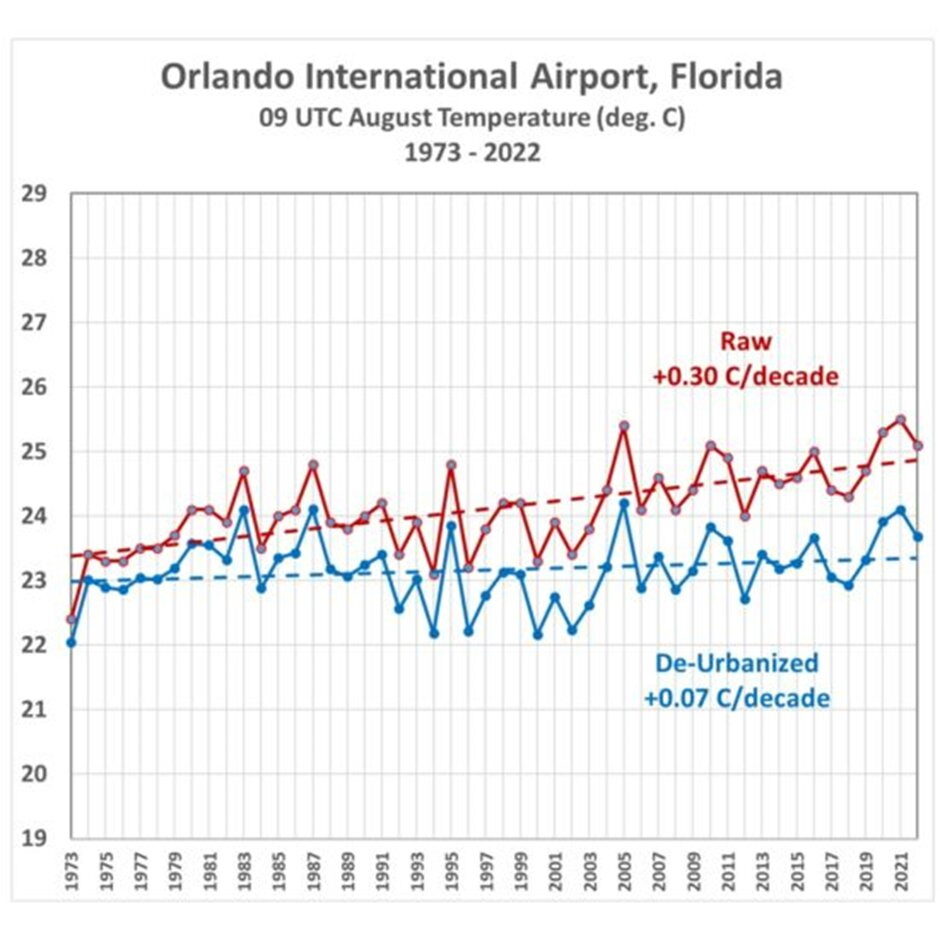

Airports are a favourite measuring place since they offer regular and accurate readings. Of course it has been noted that they are prone to enormous urban heat effects. Three of the top four temperature records during the last summer in the U.K. were set at airports. Here is why Heathrow airport should never again be used by the Met Office as a poster story for global warming.

The raw data for Orlando International Airport in Florida show a massive 0.3°C warming per decade, but this falls to a de-urbanised figure of just 0.07°C.
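For readers who want to check the percentages, the arithmetic behind the comparisons above can be sketched in a few lines. This is only an illustration using the trend figures quoted in this article; the helper name `percent_reduction` is a made-up label for this example and is not part of Dr. Spencer's method.

```python
def percent_reduction(reference, adjusted):
    """Return how far `adjusted` falls below `reference`, as a percentage."""
    return (reference - adjusted) / reference * 100.0


# Trend figures in degrees C per decade, as quoted in the article.
noaa_official = 0.24        # official NOAA average, 37 eastern states
deurbanised = 0.12          # de-urbanised figure for the same states
orlando_raw = 0.30          # raw trend, Orlando International Airport
orlando_deurbanised = 0.07  # de-urbanised trend for the same station

# De-urbanised eastern U.S. trend relative to the official NOAA trend.
print(f"Eastern U.S.: {percent_reduction(noaa_official, deurbanised):.0f}% lower")

# De-urbanised Orlando trend relative to its raw trend.
print(f"Orlando: {percent_reduction(orlando_raw, orlando_deurbanised):.0f}% lower")
```

On these numbers the eastern U.S. figure comes out 50% below the official average, and the Orlando figure roughly 77% below its raw trend.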

It is early days for Spencer's work in this field, and he reports that the 50% figure is likely to be the upper limit of de-urbanisation adjustments. Other times and months may be lower. But he notes that all CMIP6 climate models produce U.S. summertime temperature trends greater than NOAA observations, which means the "discrepancy between climate models and observations is even larger than currently suspected by many of us". Spencer ends by stating that John Christy and he, "believe it is time for a new surface temperature dataset, and the method outlined... looks like a viable approach to that end".

Meanwhile, COP27 starts at the weekend. It is a fair bet that every warning of future temperature rise will reference the politically-correct surface datasets, and every forecast of climate thermogeddon will incant the authority of climate models.

Chris Morrison is the Daily Sceptic's Environment Editor.

Reader Comments

Good language is always in need of improvement.

I got some ideas on this - do you balboa schwartz ?

I think you do based upon past conversations had.

How about some good ideas get some air....

a bit of discourse on this or that...

how about that...

balboa

....I can't find it, it is so hard to find articles on sott.net and that is a shame...

you remember I hope - twas the article with the linguistics lady about

new ideas and aspects of language not understood and

in that article, when we were posting I said

how wonderful it would be if we could

just share ideas with one another

and then find something

better...

mistakes made, errors occur, but just talking together in freedom allowing new and better ideas to emerge.

I remember the article balboa schwartz but I'm not going to post a link....I hope you remember as well.

Ken

It's a very good link about how global warming has been greatly exaggerated.

an excerpt:

The first adjustment changed how the temperature of the ocean surface is calculated, by replacing satellite data with drifting buoys and temperatures in ships’ water intake. The size of the ship determines how deep the intake tube is, and steel ships warm up tremendously under sunny, hot conditions. The buoy temperatures, which are measured by precise electronic thermistors, were adjusted upwards to match the questionable ship data. Given that the buoy network became more extensive during the pause, that’s guaranteed to put some artificial warming in the data.

The second big adjustment was over the Arctic Ocean, where there aren’t any weather stations. In this revision, temperatures were estimated from nearby land stations. This runs afoul of basic physics. Even in warm summers, there’s plenty of ice over much of the Arctic Ocean. Now, for example, when the sea ice is nearing its annual minimum, it still extends part way down Greenland’s east coast. As long as the ice-water mix is well-stirred (like a glass of ice water), the surface temperature stays at the freezing point until all the ice melts. So, extending land readings over the Arctic Ocean adds nonexistent warming to the record.

Further, both global and United States data have been frequently adjusted. There is nothing scientifically wrong with adjusting data to correct for changes in the way temperatures are observed and for changes in the thermometers. But each serial adjustment has tended to make the early years colder, which increases the warming trend. That’s wildly improbable.

In addition, thermometers are housed in standardized instrument shelters, which are to be kept a specified shade of white. Shelters in poorer countries are not repainted as often, and darker stations absorb more of the sun’s energy. It’s no surprise that poor tropical countries show the largest warming from this effect.

All this is to say that the weather balloon and satellite temperatures used in Christy’s testimony are the best data we have, and they show that the U.N.’s climate models just aren’t ready for prime time.

He and his companions... rock!

I really hate that I just strategically vote now for the candidate least likely to take away rights from all Americans that people who are honestly too dumb to vote think is a good thing.

...when is Comet Apophis due?