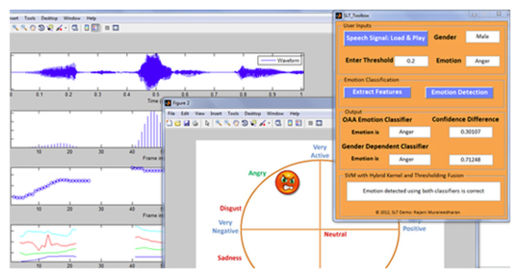

Researchers have created new software that can detect people's emotions by listening to their voices. "Basically, what we're doing is we're trying to use features of the voice, things like pitch and energy, to detect emotions from what someone is saying," said Wendi Heinzelman, the software's lead creator and a computer scientist and electrical engineer at the University of Rochester in New York.

The software doesn't need to know what words are actually being said; it analyzes only the tone of voice. For people whose voices it has previously analyzed, it correctly identifies emotions such as sadness, happiness, fear and disgust 81 percent of the time.
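To make "features of the voice" more concrete, here is a minimal Python sketch of the two features Heinzelman names, pitch and energy, computed for one short frame of audio. It is an illustration under stated assumptions, not the Rochester team's code: the function names, the autocorrelation method for estimating pitch, the assumed 75 to 400 Hz speaking range and the synthetic test tone are all hypothetical choices for this example, and the step that maps such features to an emotion is not shown.

```python
import math

def frame_energy(samples):
    """Root-mean-square (RMS) energy of one frame of audio samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def estimate_pitch(samples, sample_rate, min_hz=75.0, max_hz=400.0):
    """Crude pitch (fundamental frequency) estimate using autocorrelation.

    Tries every lag whose period falls between max_hz and min_hz (an assumed
    speaking range) and returns the frequency of the lag at which the frame
    best matches a shifted copy of itself.
    """
    min_lag = int(sample_rate / max_hz)
    max_lag = min(int(sample_rate / min_hz), len(samples) - 1)
    if min_lag < 1 or max_lag < min_lag:
        raise ValueError("frame too short for the requested pitch range")
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with itself shifted by `lag` samples.
        score = sum(samples[i] * samples[i + lag] for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# Usage: a synthetic 200 Hz tone, 50 ms long, sampled at 8 kHz.
sr = 8000
tone = [0.5 * math.sin(2 * math.pi * 200 * n / sr) for n in range(400)]
print(round(estimate_pitch(tone, sr)), round(frame_energy(tone), 3))  # prints: 200 0.354
```

A practical system would compute features like these over many frames and summarize them before handing them to a trained classifier; this simple, unnormalized autocorrelation can also lock onto the wrong multiple of the true period on real, noisy speech.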

Heinzelman still has plenty of basic research to do, but if development goes well, her discoveries could go into emotion-detecting apps for market research or for helping people analyze how others perceive them, she said. Her findings could also help robots understand human emotions, which might make future robot caretakers or co-workers easier to work with.

For psych studies and smartphone apps

For now, Heinzelman's focus is making software for her colleague Melissa Sturge-Apple, a psychologist at the University of Rochester. Sturge-Apple studies the interactions between teens and their parents. To do so, she tapes hours of conversations, then hires trained loggers to watch the videos and take notes on the emotions they see. The process is time-consuming and not always accurate.

Heinzelman wants to make software that runs on a computer and automates emotion-logging for psychology studies. Once that is done, her lab would like to turn the software into an app that runs on a smartphone. "Once we get this working for psychologists, there's no reason not to have a smartphone app," she said.

One of her students, Na Yang, has already made a simple prototype of such an app during an internship at Microsoft, Heinzelman said. Yang's app hasn't been vetted in detail, but it seems to work when people act out an emotion, Heinzelman said.

Work left to do

There are still some major questions to answer before Heinzelman's software is ready for Sturge-Apple. The software works well for people for whom it has received "training data" (samples of a person's voice labeled with the correct emotion), but it is much less accurate at identifying emotions in a new person's voice: for people who are new to the software, it detects the correct emotion only 31 percent of the time.

It wouldn't be practical to get training data from each person who would interact with the software, however. Researchers would have to catch recordings of people in the midst of talking angrily, happily and with every other emotion. Heinzelman wants to make a program that uses a limited set of training data to identify anybody's emotions.

Heinzelman and her team may ultimately find that it's impossible to create such a program, however. "That's one of the questions we have to explore. Is it possible to create this generic system that can get emotion?" she said.

To improve the program's ability to detect emotions in new voices, Heinzelman said she wants to see whether it helps to train the software on smaller groups of people, such as groups defined by age or gender. A female-only training dataset might help the program accurately detect emotions in new female voices, for example.

She is also working on ensuring the program works in a noisy environment. For now, it's only been shown to work in quiet places.

Heinzelman and her team presented their work Dec. 5 at a speech technology conference in Miami.
