We argue that over the last 50 years, incentives for academic scientists have become increasingly perverse in terms of competition for research funding, development of quantitative metrics to measure performance, and a changing business model for higher education itself. Furthermore, decreased discretionary funding at the federal and state level is creating a hypercompetitive environment among government agencies (e.g., EPA, NIH, CDC), among scientists in these agencies, and among academics seeking funding from all sources. The combination of perverse incentives and decreased funding increases pressures that can lead to unethical behavior. If a critical mass of scientists becomes untrustworthy, a tipping point is possible in which the scientific enterprise itself becomes inherently corrupt and public trust is lost, risking a new dark age with devastating consequences to humanity. Academia and federal agencies should better support science as a public good and incentivize altruistic and ethical outcomes while de-emphasizing output.

Comment: We may already be at that threshold, if not past it.

Introduction

The incentives and reward structure of academia have undergone a dramatic change in the last half century. Competition for tenure-track positions has increased, and most U.S. PhD graduates are choosing careers in industry, government, or elsewhere, partly because the current supply of PhDs far exceeds available academic positions (Cyranoski et al., 2011; Stephan, 2012a; Aitkenhead, 2013; Ladner et al., 2013; Dzeng, 2014; Kolata, 2016). Universities are also increasingly "balanc[ing] their budgets on the backs of adjuncts": part-time or adjunct positions make up 76% of the academic labor force and are paid on average $2,700 per class, without benefits or job security (Curtis and Thornton, 2013; U.S. House Committee on Education and the Workforce, 2014). There are other concerns about the culture of modern academia, as reflected by studies showing that the attractiveness of academic research careers relative to other careers declines over the course of students' PhD programs at Tier-1 institutions (Sauermann and Roach, 2012; Schneider et al., 2014), reflecting the overemphasis on quantitative metrics, competition for limited funding, and difficulties pursuing science as a public good.

In this article, we will:

- describe how perverse incentives and hypercompetition are altering the behavior of academic researchers and universities, reducing scientific progress and increasing unethical actions,

- propose a conceptual model that describes how emphasis on quantity versus quality can adversely affect true scientific progress,

- consider ramifications of this environment on the next generation of Science, Technology, Engineering and Mathematics (STEM) researchers, public perception, and the future of science itself, and finally,

- offer recommendations that could help our scientific institutions increase productivity and maintain public trust. We hope to begin a conversation among all stakeholders who acknowledge perverse incentives throughout academia, consider changes to increase scientific progress, and uphold "high ethical standards" in the profession (NAE, 2004).

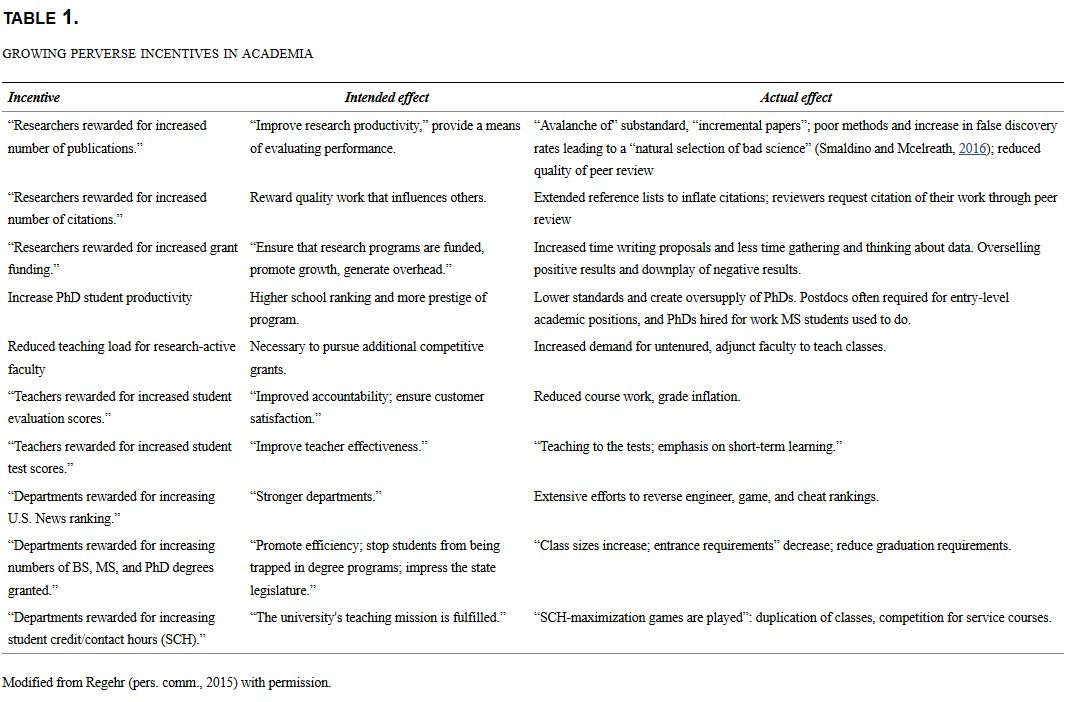

When you rely on incentives, you undermine virtues. Then when you discover that you actually need people who want to do the right thing, those people don't exist...-Barry Schwartz, Swarthmore College (Zetter, 2009)

Academics are human and readily respond to incentives. The need to achieve tenure has influenced faculty decisions, priorities, and activities since the concept first became popular (Wolverton, 1998). Recently, however, an emphasis on quantitative performance metrics (Van Noorden, 2010), increased competition for static or reduced federal research funding (e.g., NIH, NSF, and EPA), and a steady shift toward operating public universities on a private business model (Plerou et al., 1999; Brownlee, 2014; Kasperkevic, 2014) are creating an increasingly perverse academic culture. These changes may be creating problems in academia at both individual and institutional levels (Table 1).

Ultimately, the well-intentioned use of quantitative metrics may create inequities and outcomes worse than those of the systems they replaced. Specifically, if rewards go disproportionately to individuals who manipulate their metrics, the problems of the old subjective paradigms (e.g., old-boys' networks) may seem tame by comparison. In a 2010 survey, 71% of respondents stated that they feared colleagues can "game" or "cheat" their way into better evaluations at their institutions (Abbott, 2010), demonstrating that scientists are acutely attuned to the possibility of abuse in the current system.

Quantitative metrics are scholar-centric and reward output, which is not necessarily the same as achieving socially relevant and impactful research outcomes. Scientific output as measured by cited work has doubled every 9 years since about World War II (Bornmann and Mutz, 2015), producing "busier academics, shorter and less comprehensive papers" (Fischer et al., 2012) and a change in climate from "publish or perish" to "funding or famine" (Quake, 2009; Tijdink et al., 2014). Questions have been raised about how sustainable this exponential growth of the knowledge industry is (Price, 1963; Frodeman, 2011) and how much of the growth is illusory, the product of manipulation as predicted by Goodhart's Law: when a measure becomes a target, it ceases to be a good measure.
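A 9-year doubling time translates directly into an annual growth rate. As a quick worked check, assuming smooth exponential growth (a simplification of the underlying bibliometric data), with $r$ the annual growth rate and $N(t)$ the cited output in year $t$:

$$
(1+r)^{9} = 2 \quad\Longrightarrow\quad r = 2^{1/9} - 1 \approx 0.080,
\qquad
\frac{N(t+45)}{N(t)} = 2^{45/9} = 2^{5} = 32 .
$$

That is, roughly 8% more cited output every year, compounding to a 32-fold increase over 45 years.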

Recent exposés have revealed schemes by journals to manipulate impact factors, use of p-hacking by researchers to mine for statistically significant and publishable results, rigging of the peer-review process itself, and overcitation (Falagas and Alexiou, 2008; Labbé, 2010; Zhivotovsky and Krutovsky, 2008; Bartneck and Kokkelmans, 2011; Delgado López-Cózar et al., 2012; McDermott, 2013; Van Noorden, 2014; Barry, 2015). A fictional character was recently created to demonstrate a "spamming war in the heart of science," by generating 102 fake articles and a stellar h-index of 94 on Google Scholar (Labbé, 2010). Blogs describing how to raise one's h-index more discreetly without committing outright fraud are also commonplace (e.g., Dem, 2011).

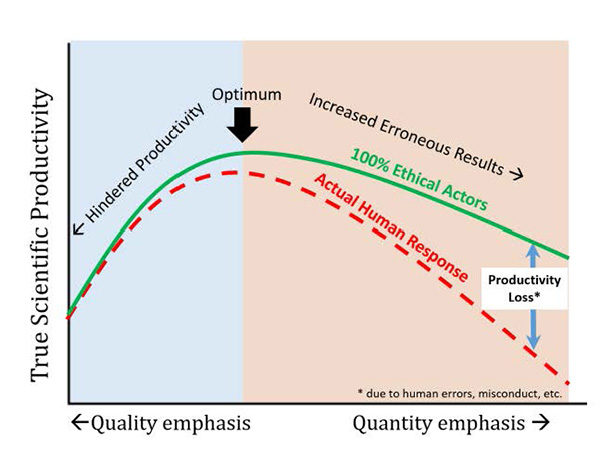

It is instructive to conceptualize the basic problem in terms of an emphasis on quality versus quantity in research, as well as the effects of perverse incentives (Fig. 1). Assuming that the goal of the scientific enterprise is to maximize true scientific progress, a process that overemphasizes quality might require triple- or quadruple-blinded studies, mandatory replication of results by independent parties, and peer review of all data and statistics before publication. Such a system would minimize mistakes but would produce very few results because of overcaution (left side of Fig. 1). At the other extreme, an overemphasis on quantity is also problematic: accepting less scientific rigor in statistics, replication, and quality control, or a less rigorous review process, would produce a very high number of articles, but once the costly setbacks associated with a high error rate are considered, true progress would also be low. A hypothetical optimum lies somewhere in between, and it is possible that our current practices (enforced by peer review) evolved to be near that optimum in an environment with fewer perverse incentives.
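To make the shape of this argument concrete, here is a toy numerical version of the conceptual model. Every functional form and constant in it (`papers`, `error_rate`, and a setback cost of one unit per wrong article) is a hypothetical choice made only so the curve has the qualitative shape described above; none of it is estimated from data or taken from Fig. 1. Each correct article adds one unit of progress, each erroneous article subtracts a setback cost, and a grid search compares the emphasis on quantity `q` that maximizes net progress with the `q` that maximizes raw article count, which is what an output-only metric rewards.

```python
# Toy model of the quality-versus-quantity trade-off.
# All functional forms and constants are hypothetical illustrations.

def papers(q: float) -> float:
    """Articles produced; rises with emphasis on quantity q in [0, 1]."""
    return 1.0 + 9.0 * q


def error_rate(q: float) -> float:
    """Fraction of articles that are wrong; rises faster as rigor drops."""
    return q ** 2


def true_progress(q: float, setback_cost: float = 1.0) -> float:
    """Correct articles add 1 unit each; wrong ones subtract setback_cost units."""
    n, e = papers(q), error_rate(q)
    return n * (1.0 - e) - setback_cost * n * e


grid = [i / 1000 for i in range(1001)]     # q = 0.000, 0.001, ..., 1.000
best_q = max(grid, key=true_progress)      # what the scientific enterprise wants
metric_q = max(grid, key=papers)           # what an output-only metric rewards

print(f"progress-maximizing q = {best_q:.3f}, progress = {true_progress(best_q):.2f}")
print(f"metric-maximizing q   = {metric_q:.3f}, progress = {true_progress(metric_q):.2f}")
```

Under these assumptions, net progress peaks at an interior value of `q` (about 0.37), whereas the article count is maximized at `q = 1`, where net progress is negative. Different assumed curves would move the optimum, but the gap between what the metric rewards and what advances science is the point of the sketch.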

While there is virtually no research exploring the impact of perverse incentives on scientific productivity, most in academia would acknowledge a collective shift in our behavior over the years (Table 1), emphasizing quantity at the expense of quality. This issue may be especially troubling for attracting and retaining altruistically minded students, particularly women and underrepresented minorities (WURM), in STEM research careers. Because modern scientific careers are perceived as focusing on "the individual scientist and individual achievement" rather than altruistic goals (Thoman et al., 2014), and WURM students tend to be attracted toward STEM fields for altruistic motives, including serving society and one's community (Diekman et al., 2010; Thoman et al., 2014), many leave STEM to seek careers and work more in keeping with their values (e.g., Diekman et al., 2010; Gibbs and Griffin, 2013; Campbell et al., 2014).

Thus, another danger of overemphasizing output over outcomes and quantity over quality is creating a system that is a "perversion of natural selection," which selectively weeds out ethical and altruistic actors while selecting, from the point of entry, for academics who are more comfortable with and responsive to perverse incentives. Likewise, if normally ethical actors feel a need to engage in unethical behavior to maintain their academic careers (Edwards, 2014), they may become complicit as described by Granovetter's well-established Threshold Model of Collective Behavior (Granovetter, 1978), in which each individual adopts a behavior once enough others have already adopted it. At that point, unethical actions have become "embedded in the structures and processes" of a professional culture, and nearly everyone has been "induced to view corruption as permissible" (Ashforth and Anand, 2003).
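Granovetter's threshold model can be illustrated with a short sketch. Each person has a threshold: the number of others who must already be acting before that person joins. The function name `cascade_size` is ours, and reading thresholds as "how many colleagues must cut corners before I do" is an illustrative assumption rather than a finding of this article. The sketch reproduces Granovetter's classic example: 100 people with thresholds 0, 1, ..., 99 all end up participating, but raising a single threshold from 1 to 2 stops the cascade after the first person.

```python
def cascade_size(thresholds: list[int]) -> int:
    """Number of people who eventually act, given each person's threshold
    (how many others must already be acting before that person joins)."""
    acting = 0
    while True:
        # Everyone whose threshold is met by the current count joins in.
        now_acting = sum(1 for t in thresholds if t <= acting)
        if now_acting == acting:  # no one new joined: the cascade has stopped
            return acting
        acting = now_acting


uniform = list(range(100))                 # thresholds 0, 1, 2, ..., 99
perturbed = [0, 2] + list(range(2, 100))   # the threshold-1 person now needs 2
print(cascade_size(uniform), cascade_size(perturbed))  # prints: 100 1
```

The two populations differ by a single person's threshold, yet the outcomes are all-or-nothing. In this toy setting, collective behavior depends on the distribution of thresholds rather than on the average, so a modest shift in incentives can tip an entire group.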

It is also telling that a new genre of articles, termed "quit lit" by the Chronicle of Higher Education, has emerged (Chronicle Vitae, 2013-2014), in which successful, altruistic, and public-minded professors give perfectly rational reasons for leaving a profession they once loved; such individuals are easily replaced with new hires who are more comfortable with the current climate. Reasons for leaving range from a saturated job market, lack of autonomy, and concerns about the very structure of academe (CHE, 2013) to "a perverse incentive structure that maintains the status quo, rewards mediocrity, and discourages potential high-impact interdisciplinary work" (Dunn, 2013).

Summary

Quantitative metrics provide a more objective means of evaluating research productivity than subjective measures, but now that they have become a target, they cease to be useful and may even be counterproductive. A continued overemphasis on quantitative metrics will pressure all but the most ethical scientists to overemphasize quantity at the expense of quality, will create pressures to "cut corners" throughout the system, and will select for scientists attracted to perverse incentives.

Scientific societies, research institutions, academic journals, and individuals have made similar arguments, and some have signed the San Francisco Declaration on Research Assessment (DORA). DORA recognizes the need for improving "ways in which output of scientific research are evaluated" and calls for challenging the research assessment practices currently in place, especially reliance on the journal impact factor (JIF). Signatories include the American Society for Cell Biology, American Association for the Advancement of Science, Howard Hughes Medical Institute, and Proceedings of the National Academy of Sciences, among 737 organizations and 12,229 individuals as of June 30, 2016. Indeed, publishers of Nature, Science, and other journals have called for downplaying the JIF, and the American Society for Microbiology has announced plans to "purge the conversation of the impact factor" and remove it from all its journals (Callaway, 2016). The argument is not to get rid of metrics, but to reduce their importance in decision-making by institutions and funding agencies, and perhaps to invest resources in creating more meaningful metrics (ACSB, 2012). DORA would be a step toward halting the "avalanche" of performance metrics dominating research assessment, which are unreliable and have long been hypothesized to threaten the quality of research (Rice, 1994; Macilwain, 2013).

Performance metrics: effect on institutions

We had to get into the top 100. That was a life-or-death matter for Northeastern.-Richard Freeland, Former President of Northeastern University (Kutner, 2014)

The perverse incentives for academic institutions are growing in scope and impact, as best exemplified by the U.S. News & World Report annual rankings that purportedly measure "academic excellence" (Morse, 2015). The rankings have strongly influenced, positively or negatively, public perceptions of the quality of education and the opportunities that universities offer (Casper, 1996; Gladwell, 2011; Tierney, 2013). Although the U.S. News & World Report rankings have been dismissed by some, they undeniably wield extraordinary influence on college administrators and university leadership: the perceptions created by the seemingly objective quantitative rankings determine "how students, parents, high schools, and colleges pursue and perceive education" in practice (Kutner, 2014; Segal, 2014).

The rankings rely on subjective, proprietary formulas and algorithms whose original validity has since been undermined by Goodhart's law: universities have attempted to game the system by redistributing resources or investing in areas that the ranking metrics emphasize. Northeastern University, for instance, unapologetically rose from #162 in 1996 to #42 in 2015 by explicitly changing its class sizes, acceptance rates, and even peer assessment. Others have cheated by reporting incorrect statistics to rise in the ranks; Bucknell University, Claremont McKenna College, Clemson University, George Washington University, and Emory University are examples of institutions that were caught (Slotnik and Perez-Pena, 2012; Anderson, 2013; Kutner, 2014). According to a 2013 Gallup and Inside Higher Ed poll, more than 90% of 576 college admission officers thought other institutions were submitting false data to U.S. News (Jaschik, 2013). As with the individual-level pressures discussed in preceding sections, this creates further pressure throughout the system to cheat simply to maintain a ranking perceived to be fair.

Hypercompetitive funding environments

If the work you propose to do isn't virtually certain of success, then it won't get funded-Roger Kornberg, Nobel laureate (Lee, 2007)

The only people who can survive in this environment are people who are absolutely passionate about what they're doing and have the self-confidence and competitiveness to just go back again and again and just persistently apply for funding-Robert Waterland, Baylor College of Medicine (Harris and Benincasa, 2014)

The federal government's role in financing research and development (R&D), creating new knowledge, or fulfilling public missions like national security, agriculture, infrastructure, and environmental health has become paramount. Since World War II, the U.S. government has largely borne the cost of high-risk, long-term research, which often has uncertain prospects and/or utility, as part of an ecosystem in which universities and industries contribute to the collective progress of mankind (Bornmann and Mutz, 2015; Hourihan, 2015).

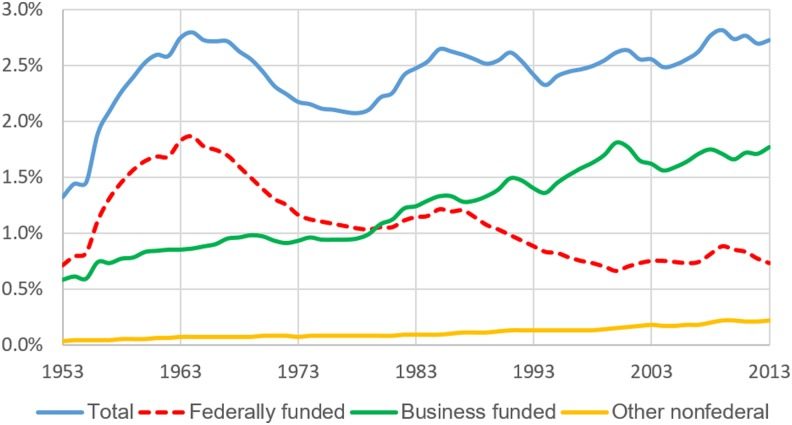

However, in the current competitive global environment, where China is projected to outspend the U.S. on R&D by 2020, some worry that the "edifice of American innovation rests on an increasingly rickety foundation" because federal R&D spending has declined over the past decade (Casassus, 2014; OECD, 2014; MIT, 2015; Porter, 2015). U.S. "Research Intensity" (i.e., federal R&D as a share of the country's gross domestic product, or GDP) has declined from about 2% in the 1960s to 0.78% in 2014 (Fig. 2). With discretionary spending in federal budgets projected to decrease, research intensity is likely to drop even further, despite increased industry funding (Hourihan, 2015).

The static or declining federal investment in research has created the "worst research funding [scenario in 50 years]" and has further ratcheted up competition for funding, because the number of researchers competing for grants is rising (Lee, 2007; Quake, 2009; Harris and Benincasa, 2014; Schneider et al., 2014; Stein, 2015). The funding rate for NIH grants fell from 30.5% to 18% between 1997 and 2014, and the average age of a first-time PI on an R01-equivalent grant has increased to 43 years (NIH, 2008, 2015). NSF funding rates have remained stagnant at 23 to 25% over the past decade (NSF, 2016). While these funding rates are still well above the breakeven point of 6%, at which the net cost of proposal writing equals the net value obtained from a grant by the grant winner (Cushman et al., 2015), there is little doubt that the grant environment is hypercompetitive, susceptible to reviewer biases, and strongly dependent on prior success as measured by quantitative metrics (Lawrence, 2009; Fang and Casadevall, 2016). Researchers must tailor their thinking to align with solicited funding and spend about half of their time on administrative and compliance requirements, drawing focus away from scientific discovery and translation (NSB, 2014; Schneider et al., 2014; Belluz et al., 2016).
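Two pieces of arithmetic help put these funding rates in perspective. First, if each submission is treated as an independent trial (a simplifying assumption, since real resubmissions are not independent), the expected number of proposals per award is 1/p, so the NIH decline from 30.5% to 18% raises that figure from about 3.3 to about 5.6. Second, a deliberately simplified breakeven condition, in which the total cost of all proposals equals the total value of the awards, gives p* = C/V for cost C per proposal and value V per award. This is our own illustration of the breakeven idea, not the model used by Cushman et al. (2015), and the dollar figures below are hypothetical, chosen only so that the ratio matches the 6% cited above.

```python
def expected_proposals_per_award(funding_rate: float) -> float:
    """Mean proposals per award if each submission is an independent trial
    succeeding with probability funding_rate (mean of a geometric distribution)."""
    if not 0.0 < funding_rate <= 1.0:
        raise ValueError("funding_rate must be in (0, 1]")
    return 1.0 / funding_rate


def breakeven_rate(cost_per_proposal: float, value_per_award: float) -> float:
    """Simplified breakeven: with N proposals each costing C and N*p awards each
    worth V, total cost N*C equals total value N*p*V when p = C / V."""
    return cost_per_proposal / value_per_award


for label, rate in [("NIH, 1997", 0.305), ("NIH, 2014", 0.18)]:
    print(f"{label}: about {expected_proposals_per_award(rate):.1f} proposals per award")

# Hypothetical figures: $30,000 of effort per proposal, $500,000 per award.
print(f"breakeven funding rate: {breakeven_rate(30_000, 500_000):.0%}")
```

Even in this crude form, the sketch shows why a funding rate can sit comfortably above breakeven for the community as a whole while still feeling hypercompetitive to each applicant: the expected number of submissions per award grows as 1/p.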

Systemic Risks to Scientific Integrity

Science is a human endeavor, and despite its obvious historical contributions to the advancement of civilization, there is growing evidence that today's research publications too frequently suffer from a lack of replicability, rely on biased data sets, apply weak or substandard statistical methods, fail to guard against researcher biases, and overhype their findings (Fanelli, 2009; Aschwanden, 2015; Belluz and Hoffman, 2015; Nuzzo, 2015; Gobry, 2016; Wilson, 2016). Retractions have revealed a troubling level of unethical activity, including outright faking of peer review, which likely represents just a small portion of the total, given the high cost of exposing, disclosing, or acknowledging scientific misconduct (Marcus and Oransky, 2015; Retraction Watch, 2015a; BBC, 2016; Borman, 2016). Warnings of systemic problems go back to at least 1991, when NSF Director Walter E. Massey noted that the size, complexity, and increasingly interdisciplinary nature of research in the face of growing competition were making science and engineering "more vulnerable to falsehoods" (The New York Times, 1991).

Misconduct is not limited to academic researchers. Federal agencies are also subject to perverse incentives and hypercompetition, giving rise to a new phenomenon of institutional scientific research misconduct (Lewis, 2014; Edwards, 2016). Recent exemplars uncovered by the first author in the Flint and Washington D.C. drinking water crises include "scientifically indefensible" reports by the U.S. Centers for Disease Control and Prevention (U.S. Centers for Disease Control and Prevention, 2004; U.S. House Committee on Science and Technology, 2010), reports based on nonexistent data published by the U.S. EPA and its consultants in industry journals (Reiber and Dufresne, 2006; Boyd et al., 2012; Edwards, 2012; Retraction Watch, 2015b; U.S. Congress House Committee on Oversight and Government Reform, 2016), and silencing of whistleblowers in EPA (Coleman-Adebayo, 2011; Lewis, 2014; U.S. Congress House Committee on Oversight and Government Reform, 2015). This problem is likely to grow as agencies increasingly compete with each other for reduced discretionary funding. It also raises legitimate and disturbing questions as to whether accepting research funding from federal agencies is inherently ethical: modern agencies clearly have conflicts of interest similar to those that are accepted and well understood for industry research sponsors. Given the mistaken presumption that research sponsored by federal agencies is neutral (Oreskes and Conway, 2010), the dangers of institutional research misconduct to society may outweigh those of industry-sponsored research (Edwards, 2014).

A "trampling of the scientific ethos" witnessed in areas as diverse as climate science and galvanic corrosion undermines the "credibility of everyone in science" (Bedeian et al., 2010; Oreskes and Conway, 2010; Edwards, 2012; Leiserowitz et al., 2012; The Economist, 2013; BBC, 2016). The Economist recently highlighted the prevalence of shoddy and nonreproducible modern scientific research and its high financial cost to society, posing an open question as to whether modern science is trustworthy and calling upon science to reform itself (The Economist, 2013). And while there are hopes that some problems could be reduced by practices that include open data, open access, postpublication peer review, metastudies, and efforts to reproduce landmark studies, these can only partly compensate for the high error rates in modern science arising from individual and institutional perverse incentives (Fig. 1).

The high costs of research misconduct

The National Science Foundation defines research misconduct as intentional "fabrication, falsification, or plagiarism in proposing, performing, or reviewing research, or in reporting research results" (Steneck, 2007; Fischer, 2011). Nationally, the percentage of respondents found guilty in research misconduct cases investigated by the Department of Health and Human Services (which includes NIH) and NSF ranges from 20% to 33% (U.S. Department of Health and Human Services, 2013; Kroll, 2015, pers. comm.). Direct costs of handling a research misconduct case have been estimated at $525,000 per case, and over $110 million is incurred annually for all such cases at the institutional level in the U.S. (Michalek et al., 2010). A total of 291 articles retracted due to misconduct during 1992-2012 accounted for $58 million in direct funding from the NIH, which is less than 1% of the agency's budget during this period, but each retracted article accounted for about $400,000 in direct costs (Stern et al., 2014).

The incidence of undetected misconduct is presumably some multiple of the cases adjudicated each year, and the true incidence is difficult to estimate. A comprehensive meta-analysis of research misconduct surveys conducted between 1987 and 2008 indicated that 1 in 50 scientists admitted to committing misconduct (fabrication, falsification, and/or modification of data) at least once, and 14% knew of colleagues who had done so (Fanelli, 2009). These numbers are likely underestimates considering the sensitivity of the questions asked, low response rates, and the Muhammad Ali effect, a self-serving bias whereby people perceive themselves as more honest than their peers (Allison et al., 1989). Indeed, delving deeper, 34% of researchers self-reported that they had engaged in "questionable research practices," including "dropping data points on a gut feeling" and "changing the design, methodology, and results of a study in response to pressures from a funding source," whereas 72% of those surveyed knew of colleagues who had done so (Fanelli, 2009). One study included in Fanelli's meta-analysis looked at rates of exposure to misconduct among 2,000 doctoral students and 2,000 faculty from the 99 largest graduate departments of chemistry, civil engineering, microbiology, and sociology, and found that 6 to 8% of both students and faculty had direct knowledge of faculty falsifying data (Swazey et al., 1993).

In life science and biomedical research, the percentage of scientific articles retracted has increased 10-fold since 1975, and 67% of retractions were due to misconduct (Fang et al., 2012). Various hypotheses have been proposed for this increase, including the "lure of the luxury journal," "pathological publishing," prevalent misconduct policies, academic culture, career stage, and perverse incentives (Martinson et al., 2009; Harding et al., 2012; Laduke, 2013; Schekman, 2013; Buela-Casal, 2014; Fanelli et al., 2015; Marcus and Oransky, 2015; Sarewitz, 2016). Nature recently declared that "pretending research misconduct does not happen is no longer an option" (Nature, 2015).

Academia and science are expected to be self-policing and self-correcting. However, based on our experiences, we believe there are incentives throughout the system that induce all stakeholders to "pretend misconduct does not happen." Science has never developed a clear system for reporting, investigating, or dealing with allegations of research misconduct, and individuals who do attempt to police behavior are likely to be frustrated and to suffer severe negative professional repercussions (Macilwain, 1997; Kevles, 2000; Denworth, 2008). Academics largely operate on an unenforceable, unwritten honor system with respect to what is considered fair in reporting research, grant writing, and "selling" research ideas, and there is serious doubt as to whether science as a whole can actually be considered self-correcting (Stroebe et al., 2012). While there are exceptional cases in which individuals have provided a reality check on overhyped research press releases in areas deemed potentially transformative (e.g., Eisen, 2010-2015; New Scientist, 2016), the limitations of hot research areas are more often downplayed or ignored. Because every modern scientific mania also creates a quantitative-metric windfall for participants, and there are few consequences for those responsible after a science bubble finally pops, the only true check on pathological science and misallocation of resources is the unwritten honor system (Langmuir et al., 1953).

If nothing is done, we will create a corrupt academic culture

The modern academic research enterprise, dubbed a "Ponzi scheme" by The Economist (The Economist, 2010), has created the existing perverse incentive system, which would have been almost inconceivable to academics of 30 to 50 years ago. We believe that this system is a threat to the future of science, and unless immediate action is taken, we run the risk of a "normalization of corruption" (Ashforth and Anand, 2003), creating a corrupt professional culture akin to those recently revealed in professional cycling and in the Atlanta school cheating scandal.

To review, during the 7 years Lance Armstrong won the Tour de France (1999-2005), 20 of the 21 podium finishers (including Armstrong) were directly tied to doping through admissions, sanctions, public investigations, or failed blood tests. Entire teams cheated together because of a "win-at-all-cost culture" that was created and sustained over time because there was no alternative in sight (U.S. ADA, 2012; Rose and Fisher, 2013; Saraceno, 2013). Numerous warning signs were ignored, and a retrospective analysis indicates that more than half of all Tour de France winners since 1980 had either tested positive for or confessed to doping (Mulvey, 2012). The resulting "culture of doping" put clean athletes under suspicion (CIRC, 2015; Dimeo, 2015) and ultimately brought worldwide disrepute to the sport.

Likewise, the Atlanta Public Schools (APS) scandal provides another example of a perverse incentive system run to its logical conclusion, but in an educational setting. Twelve former APS employees were sent to prison and dozens faced ethics sanctions for falsifying students' results on state standardized tests. The well-intentioned quantitative test results became high stakes for APS employees because the law "trigger[s] serious consequences for students (like grade promotion and graduation); schools (extra resources, reorganization, or closure); districts (potential loss of federal funds), and school employees (bonuses, demotion, poor evaluations, or firing)" (Kamenetz, 2015). The APS employees betrayed their stated mission of creating a "caring culture of trust and collaboration [where] every student will graduate ready for college and career," and participated in creating the illusion of a "high-performing school district" (APS, 2016). Clearly, perverse incentives can encourage unethical behavior aimed at manipulating quantitative metrics, even in an institution whose sole goal is to educate children.

An uncontrolled perverse incentive system can create a climate in which participants feel they must cheat to compete, whether in academia (at the individual or institutional level) or in professional sports. Professional cycling was ultimately discredited, and its rewards were not properly distributed to ethical participants; in science, the loss of altruistic actors and of public trust, together with the risk of direct harm to the public and the planet, makes the dangers immeasurably greater.

What Kind of Profession Are We Creating for the Next Generation of Academics?

So I have just one wish for you: the good luck to be somewhere where you are free to maintain the kind of integrity I have described, and where you do not feel forced by a need to maintain your position in the organization, or financial support, or so on, to lose your integrity. May you have that freedom-Richard Feynman, Nobel laureate (Feynman, 1974)

The culture of academia has undergone dramatic change in the last few decades, and quite a bit of that change has been for the better. Problems with diversity, work-life balance, funding, efficient teaching, public outreach, and engagement have been recognized and partly addressed.

As stewards of the profession, we should continually consider whether our collective actions will leave our field in a state that is better or worse than when we entered it. While factors such as state and federal funding levels are largely beyond our control, we are not powerless and passive actors. Problems with perverse incentives and hypercompetition could be addressed by the following:

- The scope of the problem must be better understood by systematically mining the experiences and perceptions held by academics in STEM fields through a comprehensive survey of high-achieving graduate students and researchers.

- The National Science Foundation should commission a panel of economists and social scientists with expertise in perverse incentives to collect and review input from all levels of academia, including retired National Academy members and distinguished STEM scholars. The panel could also develop a list of "best practices" to guide evaluation of candidates for hiring and promotion, from a long-term perspective of promoting science in the public interest and for the public good, and to keep academia a desirable career path for altruistic, ethical actors.

- Rather than pretending that the problem of research misconduct does not exist, science and engineering students should receive instruction on these subjects at both the undergraduate and graduate levels. Instruction should include a review of real world pressures, incentives, and stresses that can increase the likelihood of research misconduct.

- Beyond conventional goals of achieving quantitative metrics, a PhD program should also be viewed as an exercise in building character, with some emphasis on the ideal of practicing science as service to humanity (Huber, 2014).

- Universities need to reduce perverse incentives and uphold research misconduct policies that discourage unethical behavior.

Comment: See also "Some of the biggest problems facing science."