Approximately one year ago, I wrote about the Bangladesh mask study - the only randomised controlled trial of community masking since the start of the pandemic. (The well-known Danish study examined the effect of masking on the wearer, not the effect of community masking on community-level outcomes.)

While the Bangladesh study did find significant differences between the treatment and control arms, I described it as a "missed opportunity". That's because it wasn't an RCT of mask-wearing per se, but rather of mask promotion campaigns. And the latter may influence transmission through mechanisms other than mask-wearing - such as by changing people's behaviour.

Now there's a new critique out claiming the study's original conclusions may be wrong. It's a bit technical - let me explain.

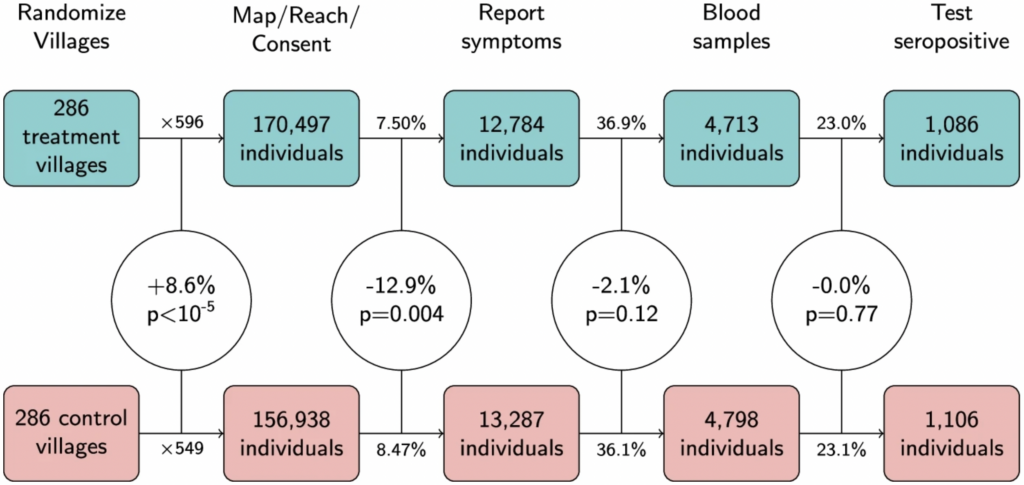

In a typical RCT: 100 patients get the drug, another 100 get a placebo, and you compare how many in each group get sick. But the Bangladesh study had a much more complex design - as shown in the figure below.

Experimental design of the Bangladesh mask study.

Headings across the top represent different stages of the experiment: first villages were randomized; then individuals within those villages were sought for consent; then they reported their symptoms etc.

The main thing to notice is that there was a statistically significant 8.6% difference between the treatment and control arms in the number of people who agreed to take part - 170,497 versus 156,938. (For those well-versed in stats, the corresponding p-value was less than 0.00001.) This isn't supposed to happen in an RCT.
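For readers who want to check the arithmetic, here is a minimal Python sketch of where the 8.6% figure comes from. It uses only the two consent counts quoted above. The helper name `relative_difference` is made up for illustration, and the sketch does not attempt to reproduce the p-value, which depends on the study's village-level design.

```python
# Consent counts quoted in the text above.
treatment_consented = 170_497
control_consented = 156_938


def relative_difference(a: int, b: int) -> float:
    """Return (a - b) / b: how much larger a is than b, as a fraction of b."""
    return (a - b) / b


# Excess of treatment-arm consenters relative to the control arm.
rel_diff = relative_difference(treatment_consented, control_consented)
print(f"{rel_diff:.1%}")  # prints 8.6%
```

The control arm is used as the baseline here, which is an assumption about how the percentage was computed, though it does match the quoted figure.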

As noted by Maria Chikina and colleagues, who wrote the critique, this difference arose because the staff who went out to the villages and sought people's consent were unblinded (i.e., they knew whether they were visiting a treatment or a control village). As a consequence, those in the treatment arm behaved slightly differently from those in the control arm.

Crucially, the 8.6% difference is as large as the main result of the original study: seroprevalence in the treatment arm was 8.7% lower than in the control arm. This means the main result could be due to factors other than the experimental treatment - even though the study was technically an RCT.
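To see why a denominator imbalance of this size matters, here is a rough Python sketch under loudly stated assumptions. It pretends both arms had exactly the same number of cases (the value `hypothetical_cases` is invented, not taken from the study) and that the rate is simply cases divided by the number of people who consented, which simplifies the study's actual outcome definition.

```python
# Consent counts quoted in the text, used here as rate denominators
# (a simplifying assumption, not the study's exact outcome definition).
treatment_denominator = 170_497
control_denominator = 156_938

# Hypothetical: identical case counts in both arms, i.e. zero effect on cases.
hypothetical_cases = 1_000

treatment_rate = hypothetical_cases / treatment_denominator
control_rate = hypothetical_cases / control_denominator

# Relative gap in rates that comes purely from the unequal denominators.
relative_gap = 1 - treatment_rate / control_rate
print(f"{relative_gap:.1%}")  # prints 8.0%
```

In this toy setup the treatment arm's rate comes out about 8% lower than the control arm's even though the number of cases is identical, and the result does not depend on the particular value chosen for `hypothetical_cases`.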

In fact, when Chikina and colleagues calculated the difference in seroprevalence measured as a count rather than as a rate - i.e., by comparing the absolute number of cases in each arm, while ignoring the denominator - they found it was only 1.8%. And you could argue this is the "correct" way to calculate the difference, given that randomisation of the denominators failed.

Overall, the new critique doesn't prove the original conclusions are wrong. But it does show the data are consistent with mask promotion campaigns having had zero causal influence on the outcome variables.

It makes sense that a face diaper might work, a valid hypothesis, but they don't and never will.

Surgical masks during surgery also don't make a difference in post op infection rate. There was at least one study showing that too.

End the madness.