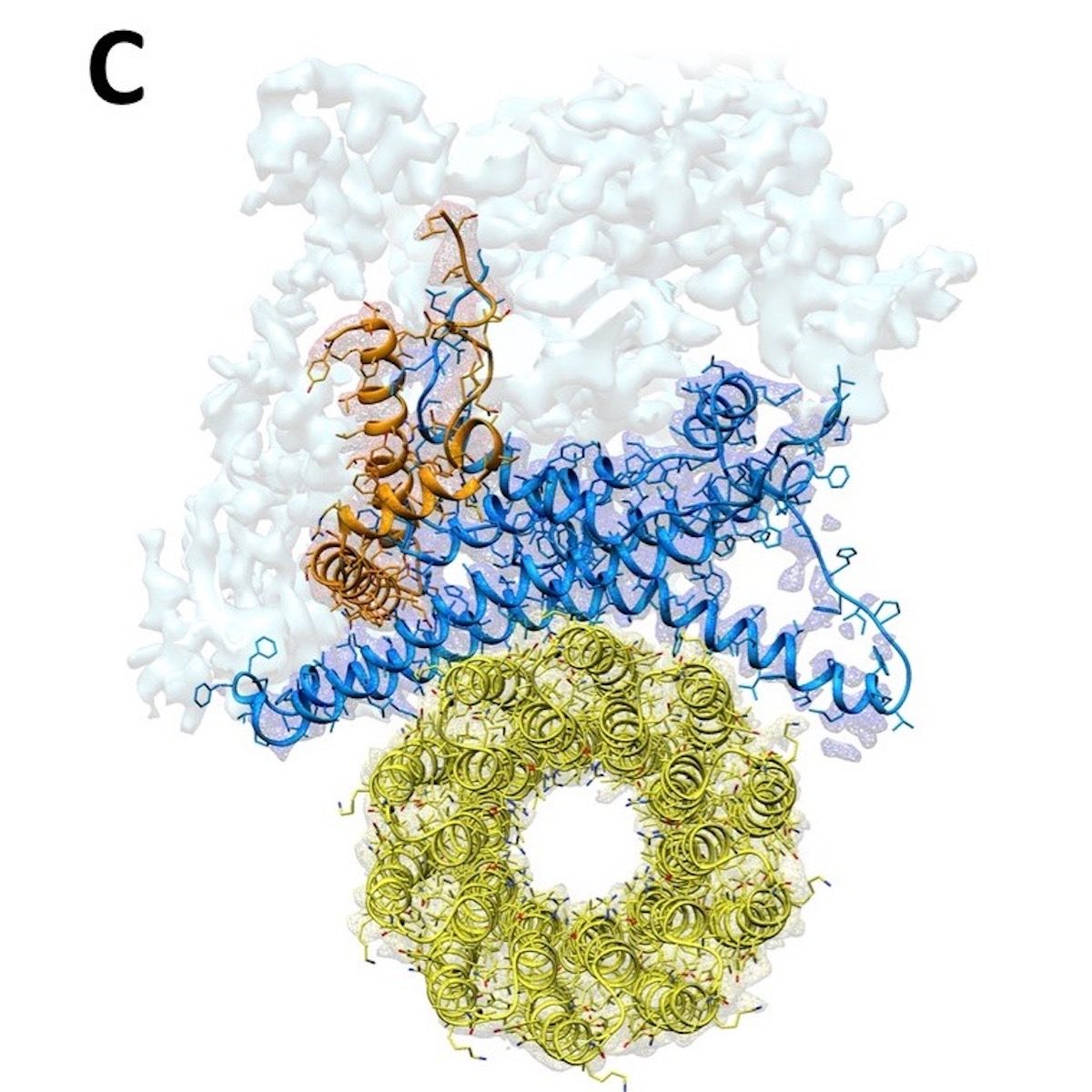

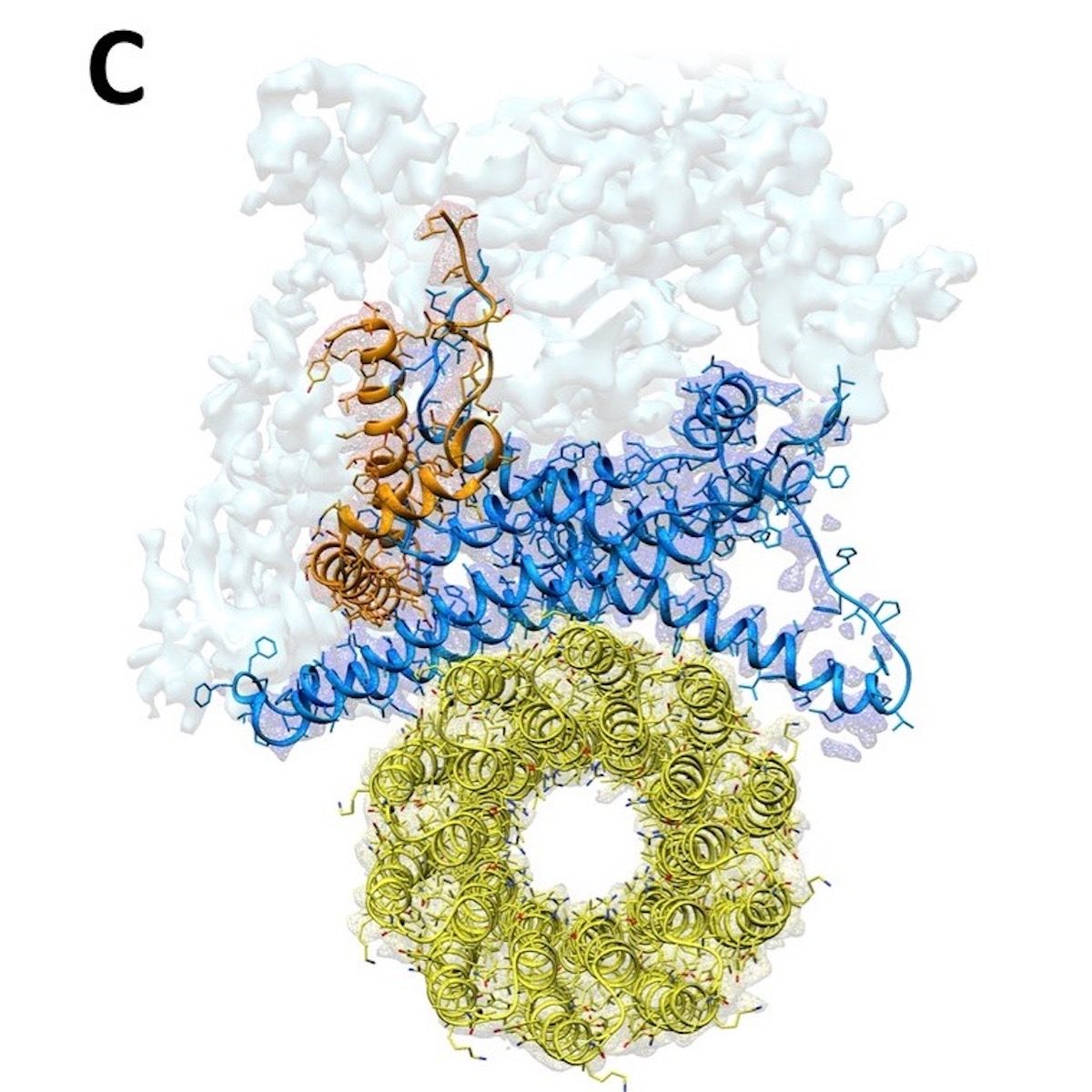

© eLife, Creative Commons Attribution license

ATP synthase is also referred to as complex V of the respiratory chain, a series of protein complexes in the inner membrane of mitochondria. The respiratory chain creates a proton gradient, which ATP synthase uses to make ATP. Sazanov was previously the first to solve the structure of bacterial complex I, and the first to solve the structure of mammalian complex I. In the new study, Sazanov and lab members Gergely Pinke and Long Zhou turned to mammalian complex V, the last unsolved structure in the mammalian respiratory chain. "F1Fo-ATP synthase is one of the most important enzymes on Earth. It provides energy for most life forms, including us humans, but until now, we didn't know fully how it works," explains Sazanov.

Rotation muddies the picture

Because the structure of the mushroom-shaped soluble F1 domain was already known, Sazanov and his team focused on the Fo domain, which is embedded in the mitochondrial membrane. Here, protons are translocated at the interface between the so-called c-ring, a ring made up of identical protein subunits, and the rest of Fo. Each c subunit picks up a proton on one side of the membrane, rotates with the ring, and releases the proton on the other side, so that protons are carried across the membrane. The c-ring is attached to the central shaft of F1, and its rotation drives ATP synthesis within F1.

To solve the structure of the Fo domain and of the entire complex, the researchers studied the enzyme from sheep mitochondria using cryo-electron microscopy. Here, ATP synthase poses a special problem: because it rotates, the enzyme can stop in three main positions, as well as in several substates. "It is very difficult to distinguish between these positions, attributing a structure to each position ATP synthase can take. But we managed to solve this computationally to build the first complete structure of the enzyme," Sazanov adds.
