The prefrontal cortex then kicked in to interpret the meaning, followed by activation of the motor cortex in preparation for a response.

During the half-second between stimulus and response, the prefrontal cortex remained active to coordinate all the other brain areas.

For a particularly hard task, like determining the antonym of a word, the brain required several seconds to respond, during which the prefrontal cortex recruited other areas of the brain, presumably including memory networks that were not actually visible.

Only then did the prefrontal cortex hand off to the motor cortex to generate a spoken response. The quicker the brain's handoff, the faster people responded.

Interestingly, the team found that the brain began to prepare the motor areas to respond very early, during initial stimulus presentation, suggesting that we get ready to respond even before we know what the response will be.

"This might explain why people sometimes say things before they think," Dr. Shestyuk noted.

The scientists used a technique called electrocorticography (ECoG), which records from several hundred electrodes placed on the brain surface and detects activity in the thin outer region, the cortex, where thinking occurs.

The study involved 16 epilepsy patients who agreed to participate in experiments while undergoing epilepsy surgery.

"This is the first step in looking at how people think and how people come up with different decisions; how people basically behave," Dr. Shestyuk said.

"We are trying to look at that little window of time between when things happen in the environment and us behaving in response to it."

Once the electrodes were placed on each patient's brain, the researchers ran a series of eight tasks that included visual and auditory stimuli.

The tasks ranged from simple, such as repeating a word or identifying the gender of a face or a voice, to complex, such as determining a facial emotion, uttering the antonym of a word or assessing whether an adjective describes the patient's personality.

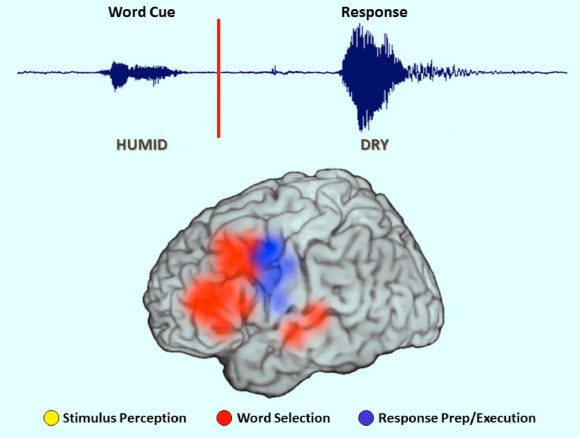

During these tasks, the brain showed four different types of neural activity.

Initially, sensory areas of the auditory and visual cortex activate to process auditory or visual cues. Subsequently, areas primarily in the sensory and prefrontal cortices activate to extract the meaning of the stimulus.

The prefrontal cortex is continuously active throughout these processes, coordinating input from different areas of the brain.

Finally, the prefrontal cortex stands down as the motor cortex activates to generate a spoken response or an action, such as pushing a button.

"This persistent activity, primarily seen in the prefrontal cortex, is a multitasking activity," Dr. Shestyuk said.

"fMRI studies often find that when a task gets progressively harder, we see more activity in the brain, and the prefrontal cortex in particular. Here, we are able to see that this is not because the neurons are working really, really hard and firing all the time, but rather, more areas of the cortex are getting recruited."

_____

Matar Haller et al. 2018. Persistent neuronal activity in human prefrontal cortex links perception and action. Nature Human Behaviour 2: 80-91; doi: 10.1038/s41562-017-0267-2
