You may remember that back in September, Google announced that its Google Neural Machine Translation (GNMT) system had gone live. It uses deep learning to produce better, more natural translations between languages. Cool!

Building on this success, GNMT's creators were curious about something. If you teach the translation system to translate English to Korean and vice versa, and also English to Japanese and vice versa... could it translate Korean to Japanese without using English as a bridge between them? They made this helpful gif to illustrate what they call "zero-shot translation" (it's the orange one):


As it turns out, yes! It produces "reasonable" translations between two languages that it has not explicitly linked in any way. Remember, no English allowed. But this raised a second question. If the computer is able to make connections between concepts and words that have not been formally linked... does that mean it has formed a concept of shared meaning for those words, one that runs deeper than simply knowing that one word or phrase is the equivalent of another?

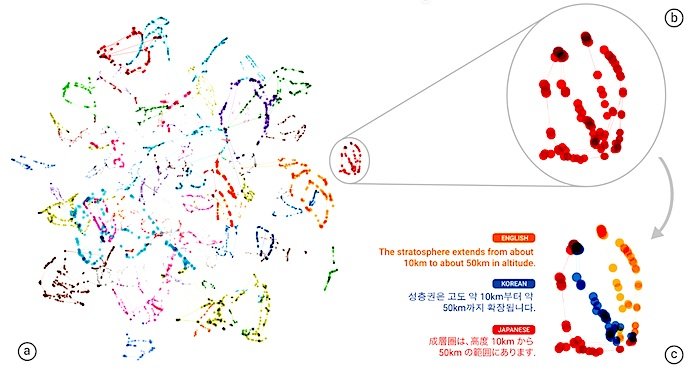

In other words, has the computer developed its own internal language to represent the concepts it uses to translate between other languages? Based on how various sentences are related to one another in the memory space of the neural network, Google's language and AI boffins think that it has.

This "interlingua" seems to exist as a deeper level of representation that captures the similarities between a sentence or word across all three languages. Beyond that, it's hard to say, since the inner workings of complex neural networks are notoriously difficult to describe.

It could be something sophisticated, or it could be something simple. But the fact that it exists at all — an original creation of the system's own to aid in its understanding of concepts it has not been trained to understand — is, philosophically speaking, pretty powerful stuff.

The paper describing the researchers' work (primarily on efficient multi-language translation, but touching on the mysterious interlingua) can be read on arXiv. No doubt the question of deeper concepts being created and employed by the system will warrant further investigation. Until then, let's assume the worst.
